“I felt like I was trying to swim in an ocean with 60-foot waves crashing down on me,” Trevor Paglen says. The 46-year-old American photographer is speaking over Zoom from a bucolic-looking spot in Northern California. But, at the height of the Covid-19 pandemic, and as he began to prepare his major new exhibition Bloom, he recalls seeing refrigerated trucks housing dead bodies close to his studio in Brooklyn.

“I was trying to figure out what these things mean,” he says. “And I realised: ‘you’re not in control here.’” His new shows, which open at Pace Gallery, London, and the Carnegie Museum of Art, Pittsburgh, in September, and then at Officine Grandi Riparazioni, Turin, in October, are “about mourning,” he says. Some of the new works created for them were made “under conditions that are completely tragic, where hundreds of thousands of people are dying for no reason”.

“How do you make art in a moment where the meaning of things around us are changing so rapidly,” he says. “I guess my answer to that was going back to allegory.”

Paglen recalls feeling “hyper-aware” on his daily walks during lockdown of the visual signifiers of winter turning to spring—the bloom of nature, as humanity remained confined indoors. He started to think about how flowers have featured throughout “other moments in history when meaning itself started to come into question”. Inside, he studied Vanitas and Baroque paintings and endured “a lot of self-questioning”.

Trevor Paglen, Bloom (#836c74), 2020 © Trevor Paglen, courtesy the artist and Pace Gallery

“What’s funny is, if you’d told me 10 years ago I’d be doing a show about flowers—I would have thought you were out of your fucking mind,” he says. “I would have thought flowers are the biggest cliches in the universe. Why would you ever go near that? But flowers have been used over and over again, throughout history, to mean so many different things. That became poignant to me; how they’ve always been allegories for life and fragility and death.”

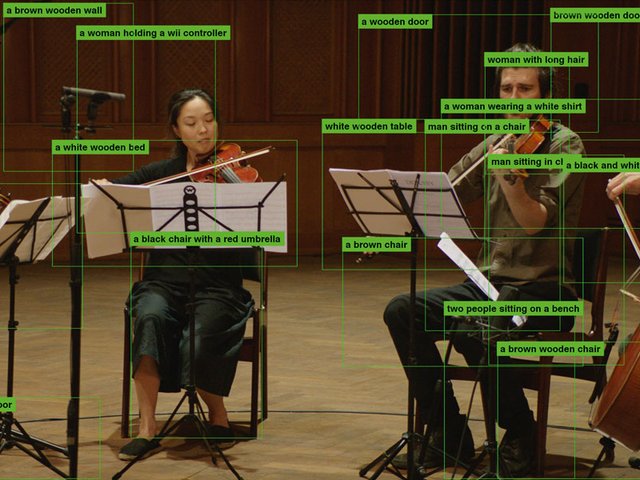

Paglen’s contribution to this long-serving trope is to create flower formations via computer vision algorithms—ones built to analyse real-life photographs. The colours and shapes in Paglen’s images are conceptualised composites the AI has detected in images of flowers. They are not flowers, but what an AI thinks a flower is. They are, therefore, allegories for how AI systems interpret the broad complexities of humanity.

Installation view of Trevor Paglen: Bloom, Pace Gallery, London, 10 September – 10 November 2020 © Trevor Paglen, courtesy the artist and Pace Gallery. Photo: Damian Griffiths, courtesy Pace Gallery

Paglen has made a career by visualising, primarily through photographs but increasingly with sculpture, installation and VR, the ways in which artificial intelligence algorithms and state-level surveillance affect the minutiae of our most intimate lives. The London exhibition is open for in-person viewing by appointment only, but visitors can also explore it through a web portal created by the artist called Octopus (2020), which shows views from cameras installed in the gallery and even lets them livestream their own images to monitors hanging on the walls.

Celebrated with the Deutsche Börse prize in 2016 and a recipient of the MacArthur Genius Grant in 2017 for his work Watching the Watchers, on which he worked in collaboration with the journalist Glenn Greenwald and the filmmaker Laura Poitras, Paglen has used photography to explore the meaning of Edward Snowden’s revelations: how private information is stolen, stored and used to co-opt our identities and predict our behaviours. Perhaps most significantly, and especially in light of recent news of state drones being deployed to surveil Black Lives Matter protests in America, Paglen has now turned his attention to how artificial intelligence is used to reinforce and undergird prejudice, racism and misogyny on a state-wide systemic level.

Trevor Paglen, AC, 2020, pigment on textile © Trevor Paglen, courtesy the artist and Pace Gallery

The Bloom exhibition delves into this critical question of our times, exploring how these invisible, omnipresent and increasingly omnipotent apparatuses can be liberated from the worst excesses of human divisiveness.

“In a lot of technical systems, there’s now an attempt to make algorithms look less biased or more inclusive,” Paglen says. “But, the problem is, you’re substituting a technical idea of diversity for a political idea. Can you make a facial recognition system that isn’t racist? You can make a system that is better at recognising non-white faces. But is it going to make it less racist? Probably not. These technologies are designed to enhance police power and state power. Can you do that in a non-racialised way? No. These are historically racist institutions. We have to look at what kinds of political power and cultural power they are enhancing.”